Inflectiv

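Inflectiv is a data infrastructure platform designed to convert unstructured information into tokenized, structured datasets for use in artificial intelligence applications. The project aims to build a marketplace where data contributors can monetize their knowledge and AI developers can access verified, high-quality data. [1] [2]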

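Overview

Inflectiv was developed to address the "trapped knowledge" problem, in which valuable information is siloed in formats such as PDFs, standard operating procedures (SOPs), and academic research, leaving it inaccessible to large language models (LLMs) and AI agents. The project targets a challenge in the AI sector: a shortage of structured data leads to unreliable outputs and failed implementations. Inflectiv's process involves uploading documents or expertise, converting them into structured data assets powered by the native token $INAI, and deploying them into workflows and applications. Users who contribute knowledge are rewarded over time based on how much it is used. [2] [5]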

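The platform is designed for a varied user base: AI developers who need clean, domain-specific data; academic institutions and researchers looking to monetize their expertise; enterprises seeking to scale AI implementations using internal data; and Web3 projects managing on-chain and off-chain information. The project has reported securing $100,000 in funding and states that more than 500 datasets are available on the platform. The system is intended as a "zero-code" solution that lets users process data without programming skills. [1]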

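Products

Inflectiv's platform consists of several core products that together form a data ecosystem. These tools support the full lifecycle of data transformation, from raw information to monetizable assets. The main products include a web application for data processing and a proof-of-concept application built on the Sui network.

The platform's product suite is divided into three main components: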

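- Dataset Engine: A set of tools that lets data contributors upload raw information from sources such as documents and research papers. The engine helps structure this data into composable, queryable assets known as knowledge graphs, ready for tokenization and licensing.

- Data Exchange (DDEX): A marketplace where contributors can create, tokenize, and list structured datasets. It also lets backers support new dataset launches, with the aim of fostering a community-driven market for data assets.

- Developer Tools: A collection of APIs and SDKs that lets developers integrate the platform's structured datasets into applications, AI agents, and data pipelines. These tools are designed for compatibility with major AI frameworks and Web3 protocols. [1]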

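Ecosystem

The Inflectiv ecosystem is designed to connect data creators, curators, and consumers in a self-reinforcing cycle. The project describes this model as a "flywheel," and it involves two main user groups: knowledge creators and AI builders. Knowledge creators, such as researchers, academic institutions, enterprises, and Web3 projects, use the Dataset Engine to contribute siloed data to the platform. [2]

As the volume and quality of available datasets grow, the platform becomes more valuable to AI builders, who are the data consumers. These developers and enterprises use the Developer Tools to access structured data and integrate it into AI models and applications. Revenue generated from this usage is used to reward knowledge creators, which encourages further contributions of high-quality data and sustains the ecosystem's growth. Backers and community members can also participate by funding, trading, and curating datasets on the Data Exchange. [1] [2]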

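Use Cases

- AI development: Gives AI builders access to clean, structured, domain-specific data for training more accurate models and reducing AI hallucinations.

- Knowledge monetization: Lets researchers, academics, and institutions tokenize and sell their expertise and research findings as structured data assets.

- Enterprise AI scaling: Helps enterprises scale AI applications by drawing on siloed internal data such as SOPs and reports, without extensive prompt engineering.

- Web3 data management: Provides Web3 projects and DAOs with a framework for structuring and using on-chain and off-chain data to generate market intelligence. [1]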

Tokenomics

Inflectiv plans to introduce a native utility token, designated $INAI, for its ecosystem. The project has scheduled its token generation event (TGE) for Q4 2025. [2]

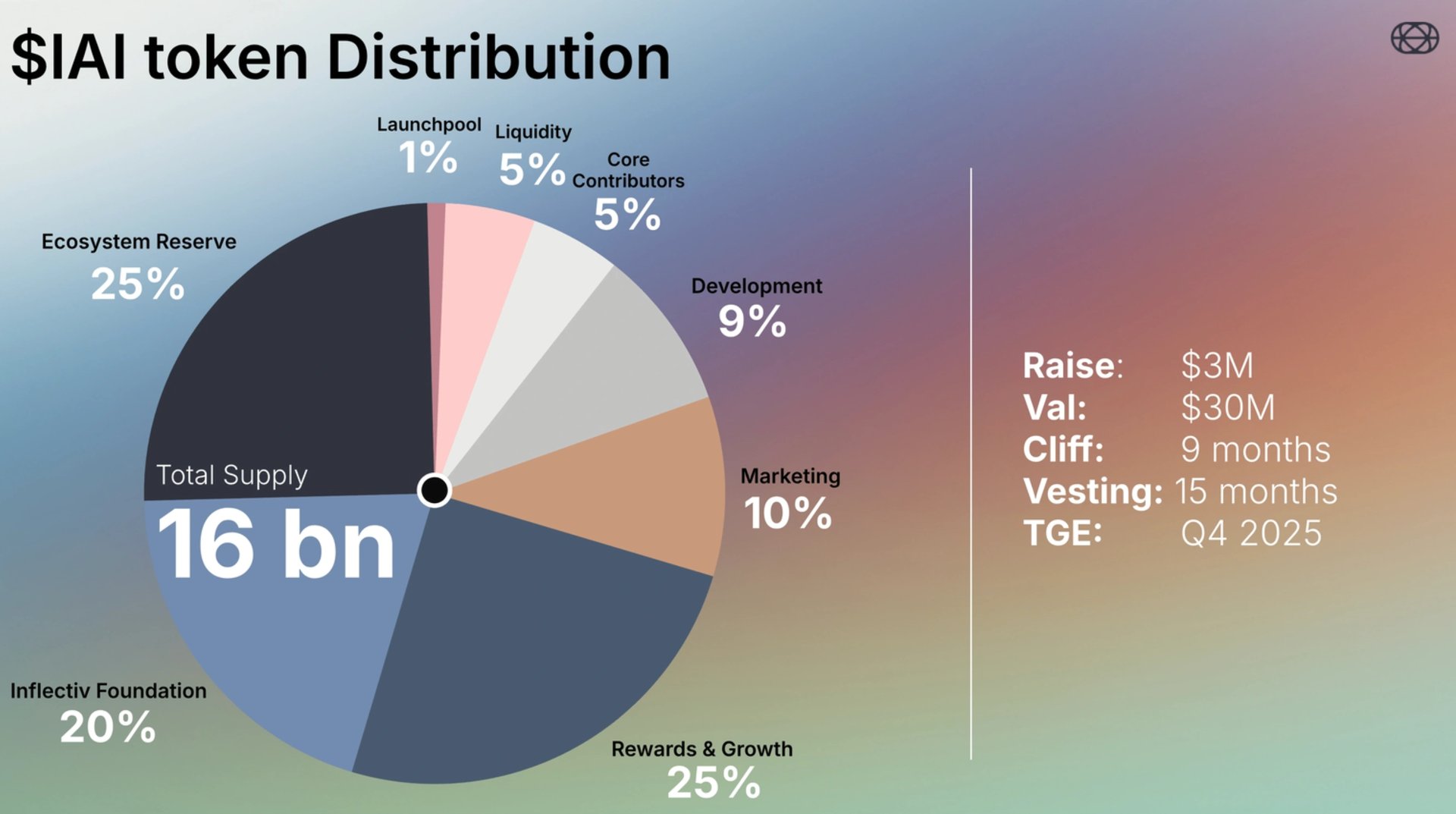

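The total supply of the $INAI token is set at 16 billion. The distribution is structured as follows:

- Rewards and growth: 25%

- Ecosystem reserve: 25%

- Inflectiv Foundation: 20%

- Marketing: 10%

- Development: 9%

- Core contributors: 5%

- Liquidity: 5%

- Launchpool: 1%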
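
The percentages listed above sum to 100%. As a quick arithmetic check, the following minimal sketch converts each share into a token count using the stated total supply of 16 billion. The dictionary labels and the function name `allocation_tokens` are illustrative choices, not identifiers published by the project.

```python
TOTAL_SUPPLY = 16_000_000_000  # total $INAI supply as stated in the article

# Allocation percentages as listed in the article (labels are illustrative).
ALLOCATION_PCT = {
    "Rewards and growth": 25,
    "Ecosystem reserve": 25,
    "Inflectiv Foundation": 20,
    "Marketing": 10,
    "Development": 9,
    "Core contributors": 5,
    "Liquidity": 5,
    "Launchpool": 1,
}


def allocation_tokens(total_supply, pct_by_bucket):
    """Return the token count per bucket using exact integer arithmetic."""
    return {name: total_supply * pct // 100 for name, pct in pct_by_bucket.items()}


tokens = allocation_tokens(TOTAL_SUPPLY, ALLOCATION_PCT)

# Sanity checks: the shares cover the whole supply with nothing left over.
assert sum(ALLOCATION_PCT.values()) == 100
assert sum(tokens.values()) == TOTAL_SUPPLY

for name, amount in tokens.items():
    print(f"{name}: {amount:,}")
```

Integer arithmetic is used so that the per-bucket amounts add back up to the total supply exactly, with no floating-point rounding.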

On the financial side, Inflectiv has reported raising $3 million at a $30 million valuation. Token release terms include a 9-month cliff and a 15-month vesting period, with the token generation event (TGE) scheduled for Q4 2025. [3]
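
The article gives only the cliff and vesting lengths. It does not say which allocations they apply to or how tokens unlock within the vesting period. As a rough sketch, assuming a linear monthly unlock that begins once the cliff ends (both the linear shape and the cliff-then-vesting ordering are assumptions, as is the function name `unlocked_fraction`), the unlocked share could be modeled like this:

```python
from fractions import Fraction

CLIFF_MONTHS = 9     # cliff length stated in the article
VESTING_MONTHS = 15  # vesting length stated in the article


def unlocked_fraction(months_since_tge, cliff=CLIFF_MONTHS, vesting=VESTING_MONTHS):
    """Fraction of an allocation unlocked at a given month after TGE.

    ASSUMPTION: nothing unlocks during the cliff, then tokens unlock
    linearly, month by month, over the vesting period that follows it.
    """
    if months_since_tge <= cliff:
        return Fraction(0)
    elapsed = min(months_since_tge - cliff, vesting)
    return Fraction(elapsed, vesting)


for month in (0, 9, 10, 16, 24, 30):
    print(month, unlocked_fraction(month))
```

Under these assumptions an allocation would be fully unlocked 24 months after the TGE. A schedule that counts the cliff as part of the vesting period, or that releases a tranche at the cliff date, would give different numbers.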

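Token Utility

- Monetization and access: The token serves as the medium of exchange that developers and enterprises use to pay for access to datasets. Contributors also use it to monetize the datasets they create and upload.

- Creator rewards: $INAI is distributed to dataset creators, curators, and validators as a reward for their contributions to the platform.

- Traceability: It acts as an on-chain traceability mechanism that tracks the provenance and usage of data assets within the ecosystem.

- Staking: The token is used to meet staking requirements within the protocol.

- Burn mechanism: The token economic model is expected to include a burn mechanism to help manage token supply. [2]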

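Partnerships

Inflectiv has announced integrations and partnerships with several organizations in the Web3 and cloud computing sectors to build out its platform and ecosystem. The project also states that it is running pilots with undisclosed universities, DAOs, and enterprise teams, and that it has secured more than 15 partnerships within the Web3 space.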
